Introduction

REST over HTTP/1.1: text-based, human-readable, on the order of ~100ms per call once serialization overhead is included. gRPC over HTTP/2: binary, multiplexed, streaming, roughly ~5-10ms for the same payload. The exact numbers depend on payload size, network, and implementation, but the direction is usually the same.

The trade-off is real though. You lose browser compatibility, human-readable debugging, and the entire ecosystem of tools that assume JSON. For public APIs, that is a bad trade. For internal service-to-service calls where you control both ends and performance matters, it is not even close.

This guide builds a gRPC microservice in Go. For protobuf syntax itself, the official proto3 language guide is good -- no point duplicating it here. We focus on when gRPC actually beats REST, streaming patterns (the killer feature), and the operational pain nobody warns you about.

Assumes Go basics and a general understanding of microservices. For Go concurrency, see our Go Concurrency Patterns guide.

gRPC vs REST: When It Actually Matters

Protocol Buffers encode data as compact binary. Payloads roughly 3-10x smaller than JSON, serialization 5-20x faster. At high message volumes -- hundreds of thousands of inter-service calls per second -- that difference shows up in your cloud bill. HTTP/2 multiplexes streams over a single connection, compresses headers, and gives you bidirectional communication. No more opening dozens of TCP connections in parallel.

But none of that matters if your services make 50 calls per second. At that volume, JSON over HTTP/1.1 is fine. The overhead is rounding error. gRPC starts justifying its complexity around the point where serialization shows up in your flame graphs or where you need streaming.

Streaming is the killer feature. Not the performance. Server streaming, client streaming, bidirectional -- all first-class in gRPC. With REST you would be stitching together WebSockets or Server-Sent Events and writing your own framing protocol. With gRPC the proto file defines the stream and the generated code handles the plumbing.

Using .proto files as a single source of truth is the other big win. Rename a field, regenerate, and every stale reference fails at compile time. Not at 3 AM when a downstream service starts returning garbage.

Where REST Still Wins

Browsers. JSON is readable. You can curl it, paste it into Slack. Binary protobuf requires tooling to decode. REST has Swagger, Postman, caching proxies that understand HTTP natively. gRPC tooling is improving but not there yet.

The honest answer: gRPC for internal service-to-service calls where performance or streaming matters. REST at the API boundary for external consumers. Most teams should run both, not pick one.

Protocol Buffers: Defining Your Schema

An order management service -- creating orders, fetching them, streaming status updates.

syntax = "proto3";

package orders;

option go_package = "github.com/myapp/proto/orders";

// The Order service handles all order-related operations
service OrderService {
    // Create a new order
    rpc CreateOrder(CreateOrderRequest) returns (OrderResponse);

    // Get an existing order by ID
    rpc GetOrder(GetOrderRequest) returns (OrderResponse);

    // Stream real-time order status updates
    rpc WatchOrderStatus(WatchOrderRequest) returns (stream OrderStatusUpdate);

    // Batch create orders via client streaming
    rpc BatchCreateOrders(stream CreateOrderRequest) returns (BatchOrderResponse);

    // Live order processing with bidirectional streaming
    rpc ProcessOrders(stream OrderAction) returns (stream OrderEvent);
}

message CreateOrderRequest {
    string customer_id = 1;
    repeated OrderItem items = 2;
    Address shipping_address = 3;
}

message OrderItem {
    string product_id = 1;
    int32 quantity = 2;
    double unit_price = 3;
}

message Address {
    string street = 1;
    string city = 2;
    string state = 3;
    string zip_code = 4;
    string country = 5;
}

message OrderResponse {
    string order_id = 1;
    string customer_id = 2;
    repeated OrderItem items = 3;
    OrderStatus status = 4;
    double total_amount = 5;
    string created_at = 6;
}

enum OrderStatus {
    PENDING = 0;
    CONFIRMED = 1;
    PROCESSING = 2;
    SHIPPED = 3;
    DELIVERED = 4;
    CANCELLED = 5;
}

message GetOrderRequest {
    string order_id = 1;
}

message WatchOrderRequest {
    string order_id = 1;
}

message OrderStatusUpdate {
    string order_id = 1;
    OrderStatus previous_status = 2;
    OrderStatus current_status = 3;
    string updated_at = 4;
    string message = 5;
}

message BatchOrderResponse {
    int32 total_created = 1;
    int32 total_failed = 2;
    repeated string order_ids = 3;
}

message OrderAction {
    string order_id = 1;
    string action = 2;
}

message OrderEvent {
    string order_id = 1;
    string event_type = 2;
    string details = 3;
    string timestamp = 4;
}

Five RPC methods covering all four patterns: unary, server stream, client stream, bidirectional. Field numbers are what the binary encoding uses -- not the names -- so you can rename fields without breaking wire compatibility. repeated means a list.

Generate the Go code:

# Install the Go plugins for protoc (protoc itself must be installed separately)
go install google.golang.org/protobuf/cmd/protoc-gen-go@latest
go install google.golang.org/grpc/cmd/protoc-gen-go-grpc@latest

# Generate Go code from the proto file
protoc --go_out=. --go_opt=paths=source_relative \
    --go-grpc_out=. --go-grpc_opt=paths=source_relative \
    proto/order_service.proto

Two files come out: order_service.pb.go (message types) and order_service_grpc.pb.go (service interface and client stub). Never edit them.

Implementing a gRPC Server

The generated code gives you an interface. You implement the methods. gRPC handles HTTP/2, serialization, and connection management. Here is how the pieces fit together -- the struct embeds the unimplemented server (forward-compatibility, so adding new RPC methods to your proto later does not break existing server code), CreateOrder validates the request using gRPC status codes (more precise than HTTP status codes -- InvalidArgument, NotFound, PermissionDenied -- and consistent across every language), and the main function is minimal: TCP listener, gRPC server, register, serve.

package main

import (
    "context"
    "fmt" // used by WatchOrderStatus in the streaming section
    "log"
    "net"
    "sync"
    "time"

    "github.com/google/uuid"
    pb "github.com/myapp/proto/orders"
    "google.golang.org/grpc"
    "google.golang.org/grpc/codes"
    "google.golang.org/grpc/status"
)

type orderServer struct {
    pb.UnimplementedOrderServiceServer
    mu     sync.RWMutex
    orders map[string]*pb.OrderResponse
}

func newOrderServer() *orderServer {
    return &orderServer{
        orders: make(map[string]*pb.OrderResponse),
    }
}

// CreateOrder handles unary RPC for creating a new order
func (s *orderServer) CreateOrder(
    ctx context.Context,
    req *pb.CreateOrderRequest,
) (*pb.OrderResponse, error) {
    // Validate the request
    if req.CustomerId == "" {
        return nil, status.Error(
            codes.InvalidArgument,
            "customer_id is required",
        )
    }
    if len(req.Items) == 0 {
        return nil, status.Error(
            codes.InvalidArgument,
            "at least one item is required",
        )
    }

    // Calculate total amount
    var total float64
    for _, item := range req.Items {
        total += item.UnitPrice * float64(item.Quantity)
    }

    // Create the order
    order := &pb.OrderResponse{
        OrderId:     uuid.New().String(),
        CustomerId:  req.CustomerId,
        Items:       req.Items,
        Status:      pb.OrderStatus_PENDING,
        TotalAmount: total,
        CreatedAt:   time.Now().Format(time.RFC3339),
    }

    // Store the order
    s.mu.Lock()
    s.orders[order.OrderId] = order
    s.mu.Unlock()

    log.Printf("Created order %s for customer %s (total: $%.2f)",
        order.OrderId, order.CustomerId, order.TotalAmount)
    return order, nil
}

// GetOrder retrieves an order by ID
func (s *orderServer) GetOrder(
    ctx context.Context,
    req *pb.GetOrderRequest,
) (*pb.OrderResponse, error) {
    s.mu.RLock()
    order, exists := s.orders[req.OrderId]
    s.mu.RUnlock()

    if !exists {
        return nil, status.Errorf(
            codes.NotFound,
            "order %s not found", req.OrderId,
        )
    }
    return order, nil
}

func main() {
    listener, err := net.Listen("tcp", ":50051")
    if err != nil {
        log.Fatalf("Failed to listen: %v", err)
    }

    grpcServer := grpc.NewServer()
    pb.RegisterOrderServiceServer(grpcServer, newOrderServer())

    log.Println("gRPC server listening on :50051")
    if err := grpcServer.Serve(listener); err != nil {
        log.Fatalf("Failed to serve: %v", err)
    }
}

Connection management and protocol negotiation happen under the hood. You never deal with HTTP/2 framing directly.

Building the gRPC Client

Mirror of the server. Nothing new.

package main

import (
    "context"
    "log"
    "time"

    pb "github.com/myapp/proto/orders"
    "google.golang.org/grpc"
    "google.golang.org/grpc/credentials/insecure"
)

func main() {
    // Establish a connection to the gRPC server
    conn, err := grpc.NewClient(
        "localhost:50051",
        grpc.WithTransportCredentials(
            insecure.NewCredentials(),
        ),
    )
    if err != nil {
        log.Fatalf("Failed to connect: %v", err)
    }
    defer conn.Close()

    // Create a client from the connection
    client := pb.NewOrderServiceClient(conn)

    // Set a timeout for the request
    ctx, cancel := context.WithTimeout(
        context.Background(), 5*time.Second,
    )
    defer cancel()

    // Create a new order
    order, err := client.CreateOrder(ctx, &pb.CreateOrderRequest{
        CustomerId: "cust-42",
        Items: []*pb.OrderItem{
            {
                ProductId: "prod-101",
                Quantity:  2,
                UnitPrice: 29.99,
            },
            {
                ProductId: "prod-205",
                Quantity:  1,
                UnitPrice: 49.99,
            },
        },
        ShippingAddress: &pb.Address{
            Street:  "123 Main St",
            City:    "Portland",
            State:   "OR",
            ZipCode: "97201",
            Country: "US",
        },
    })
    if err != nil {
        log.Fatalf("CreateOrder failed: %v", err)
    }
    log.Printf("Order created: %s (total: $%.2f)",
        order.OrderId, order.TotalAmount)

    // Retrieve the order we just created
    fetched, err := client.GetOrder(ctx, &pb.GetOrderRequest{
        OrderId: order.OrderId,
    })
    if err != nil {
        log.Fatalf("GetOrder failed: %v", err)
    }
    log.Printf("Fetched order: %s | Status: %s | Items: %d",
        fetched.OrderId, fetched.Status, len(fetched.Items))
}

insecure.NewCredentials() is for local development only. Unencrypted gRPC in a shared Kubernetes cluster means anyone who can observe the network path between pods can read the traffic. context.WithTimeout is not optional -- without it, a hung server holds your goroutine forever.

The call is client.CreateOrder(ctx, &pb.CreateOrderRequest{...}). No URL construction, no HTTP method, no marshaling. Type-safe all the way down. The compiler catches wrong types and misspelled field names at build time, not at runtime (unset fields still compile, since proto3 fields default to their zero values). This is the part that makes gRPC worth the setup cost even if you do not care about performance.

Streaming: Server, Client and Bidirectional

This is what justifies gRPC's existence for most teams. Not the performance benchmarks. Streaming.

Server Streaming

One request in, a stream of messages back. Order status updates, tailing logs, live event feeds.

// WatchOrderStatus streams real-time status updates to the client
func (s *orderServer) WatchOrderStatus(
    req *pb.WatchOrderRequest,
    stream pb.OrderService_WatchOrderStatusServer,
) error {
    // Verify the order exists
    s.mu.RLock()
    order, exists := s.orders[req.OrderId]
    s.mu.RUnlock()

    if !exists {
        return status.Errorf(
            codes.NotFound,
            "order %s not found", req.OrderId,
        )
    }

    // Simulate order status progressing over time
    statuses := []pb.OrderStatus{
        pb.OrderStatus_CONFIRMED,
        pb.OrderStatus_PROCESSING,
        pb.OrderStatus_SHIPPED,
        pb.OrderStatus_DELIVERED,
    }

    previousStatus := order.Status
    for _, nextStatus := range statuses {
        // Check if the client has disconnected
        if err := stream.Context().Err(); err != nil {
            log.Printf("Client disconnected: %v", err)
            return status.Error(
                codes.Canceled, "client disconnected",
            )
        }

        // Simulate processing time
        time.Sleep(2 * time.Second)

        update := &pb.OrderStatusUpdate{
            OrderId:        req.OrderId,
            PreviousStatus: previousStatus,
            CurrentStatus:  nextStatus,
            UpdatedAt:      time.Now().Format(time.RFC3339),
            Message: fmt.Sprintf(
                "Order moved from %s to %s",
                previousStatus, nextStatus,
            ),
        }

        if err := stream.Send(update); err != nil {
            return err
        }
        log.Printf("Sent status update: %s -> %s",
            previousStatus, nextStatus)
        previousStatus = nextStatus
    }
    return nil
}

stream.Send() pushes one message. Function returns, gRPC closes the stream. The stream.Context().Err() check detects client disconnection -- skip it and you waste resources sending updates into the void. This has bitten me in production where a disconnected client left the server looping for minutes.

Client Streaming and Bidirectional Streaming

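Client streaming reverses it: client sends a stream, server responds once. Batch uploads, aggregation. Bidirectional is both at once -- independent message streams in each direction. The client code below first consumes the WatchOrderStatus server stream from the previous section, then drives the bidirectional ProcessOrders RPC.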

// watchOrder consumes the WatchOrderStatus server stream.
// This snippet also needs "io" in the client's import block.
func watchOrder(client pb.OrderServiceClient, orderID string) {
    ctx, cancel := context.WithTimeout(
        context.Background(), 30*time.Second,
    )
    defer cancel()

    // Start the server stream
    stream, err := client.WatchOrderStatus(ctx,
        &pb.WatchOrderRequest{OrderId: orderID},
    )
    if err != nil {
        log.Fatalf("WatchOrderStatus failed: %v", err)
    }

    // Read messages from the stream until it closes
    for {
        update, err := stream.Recv()
        if err == io.EOF {
            log.Println("Stream completed")
            break
        }
        if err != nil {
            log.Fatalf("Error receiving update: %v", err)
        }
        log.Printf("[%s] %s -> %s: %s",
            update.UpdatedAt,
            update.PreviousStatus,
            update.CurrentStatus,
            update.Message,
        )
    }
}

// processOrders shows bidirectional streaming
func processOrders(client pb.OrderServiceClient) {
    ctx, cancel := context.WithTimeout(
        context.Background(), 60*time.Second,
    )
    defer cancel()

    stream, err := client.ProcessOrders(ctx)
    if err != nil {
        log.Fatalf("ProcessOrders failed: %v", err)
    }

    // Send actions in a separate goroutine
    go func() {
        // Hold pointers: generated protobuf messages should not be copied by value
        actions := []*pb.OrderAction{
            {OrderId: "order-1", Action: "confirm"},
            {OrderId: "order-2", Action: "ship"},
            {OrderId: "order-3", Action: "cancel"},
        }
        for _, action := range actions {
            if err := stream.Send(action); err != nil {
                log.Printf("Send error: %v", err)
                return
            }
            time.Sleep(time.Second)
        }
        stream.CloseSend()
    }()

    // Receive events from the server
    for {
        event, err := stream.Recv()
        if err == io.EOF {
            break
        }
        if err != nil {
            log.Fatalf("Recv error: %v", err)
        }
        log.Printf("Event: [%s] %s - %s",
            event.OrderId, event.EventType, event.Details)
    }
}

Notice the goroutine split in the bidirectional example. One goroutine sends, the main goroutine receives. No lock-step ordering. Both directions flow independently. Try doing this with REST. You end up with WebSockets, custom framing, reconnection logic, and a month of debugging edge cases. With gRPC the proto file defines the contract and the generated code handles the plumbing.

Error Handling and Deadlines

Network calls fail in more ways than local function calls. Slow server. Dropped connection mid-response. Partial results before a crash.

gRPC Status Codes

Canonical codes, consistent across every language. The ones you reach for most: InvalidArgument (3), NotFound (5), AlreadyExists (6), PermissionDenied (7). Two codes deserve special attention. DeadlineExceeded (4) -- operation ran out of time. Unavailable (14) -- transient failure, safe to retry. Internal (13) should be rare. When you use it, make sure you are logging enough to debug it.

Deadlines

Every gRPC call needs a deadline. Not "should have." Needs.

Without one, a hung downstream service holds goroutines open until your caller runs out of memory. Deadlines propagate through the call chain as long as each service passes the incoming context to its outgoing calls. Service A calls B with a 5-second deadline. B calls C. C inherits whatever time remains. No manual bookkeeping.

func getOrderWithRetry(
    client pb.OrderServiceClient,
    orderID string,
    maxRetries int,
) (*pb.OrderResponse, error) {
    var lastErr error

    for attempt := 0; attempt <= maxRetries; attempt++ {
        // Each attempt gets its own deadline
        ctx, cancel := context.WithTimeout(
            context.Background(), 3*time.Second,
        )
        order, err := client.GetOrder(ctx, &pb.GetOrderRequest{
            OrderId: orderID,
        })
        cancel() // always cancel to avoid context leak

        if err == nil {
            return order, nil
        }
        lastErr = err

        st, ok := status.FromError(err)
        if !ok {
            // Not a gRPC error, don't retry
            return nil, fmt.Errorf(
                "non-gRPC error: %w", err,
            )
        }

        switch st.Code() {
        case codes.Unavailable, codes.DeadlineExceeded:
            // These are safe to retry
            backoff := time.Duration(attempt+1) * 500 * time.Millisecond
            log.Printf(
                "Attempt %d failed (%s), retrying in %v...",
                attempt+1, st.Code(), backoff,
            )
            time.Sleep(backoff)
            continue
        case codes.NotFound, codes.InvalidArgument,
            codes.PermissionDenied:
            // These will not get better with retrying
            return nil, fmt.Errorf(
                "permanent error (code %s): %s",
                st.Code(), st.Message(),
            )
        default:
            return nil, fmt.Errorf(
                "unexpected error (code %s): %s",
                st.Code(), st.Message(),
            )
        }
    }

    return nil, fmt.Errorf(
        "all %d retries exhausted: %w",
        maxRetries, lastErr,
    )
}

Unavailable and DeadlineExceeded are retryable here because GetOrder is an idempotent read; for a write like CreateOrder, a call that timed out may already have succeeded on the server. NotFound and InvalidArgument are not retryable -- retrying will not fix them, it will just hammer the server. Without this separation, every transient failure turns into a self-inflicted denial of service. This is the mistake I see most often in gRPC retry code.

Backoff here is linear for simplicity. Production: add jitter. Otherwise all clients retry at the same instant and you get a thundering herd. The gRPC library also supports declarative retry policies in the service config, so you do not always need to write this by hand.

Service Discovery and Load Balancing

This is where gRPC gets operationally painful.

DNS-Based Discovery

In Kubernetes, DNS-based discovery works out of the box. Each service gets a DNS name. But a regular ClusterIP Service resolves to one virtual IP address, and gRPC holds a single long-lived connection to it, so all requests from a client end up on one pod. For actual load balancing, you need client-side balancing or a proxy that understands HTTP/2.

Client-Side Load Balancing

gRPC has built-in client-side balancing. Client resolves a service name to multiple addresses, distributes requests.

import (
    "fmt"

    "google.golang.org/grpc"
    _ "google.golang.org/grpc/balancer/roundrobin"
    "google.golang.org/grpc/credentials/insecure"
)

func createLoadBalancedClient() (*grpc.ClientConn, error) {
    // Use dns:/// scheme for DNS-based discovery
    // The round_robin policy distributes calls evenly
    conn, err := grpc.NewClient(
        "dns:///order-service.default.svc.cluster.local:50051",
        grpc.WithTransportCredentials(
            insecure.NewCredentials(),
        ),
        grpc.WithDefaultServiceConfig(
            `{"loadBalancingConfig":[{"round_robin":{}}]}`,
        ),
    )
    if err != nil {
        return nil, fmt.Errorf(
            "failed to create client: %w", err,
        )
    }
    return conn, nil
}

dns:/// triggers gRPC's built-in DNS resolver. round_robin distributes calls evenly across resolved addresses. In Kubernetes that only spreads load if the name resolves to the individual pod IPs, which means a headless Service. Good enough to start.
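Conceptually, round-robin picking is little more than an atomic counter over the resolved addresses. This is a simplified sketch with a hypothetical roundRobin type, not gRPC's actual balancer, which also tracks connection state and skips backends that are not ready.

```go
package main

import (
    "fmt"
    "sync/atomic"
)

// roundRobin hands out addresses in rotation. It is safe for concurrent
// use because the position is advanced with an atomic counter.
type roundRobin struct {
    addrs []string
    next  atomic.Uint64
}

// pick returns the next address, or "" if none were resolved.
func (r *roundRobin) pick() string {
    if len(r.addrs) == 0 {
        return ""
    }
    n := r.next.Add(1) - 1
    return r.addrs[n%uint64(len(r.addrs))]
}

func main() {
    // Hypothetical pod IPs, as a resolver might return for a headless Service.
    rr := &roundRobin{addrs: []string{
        "10.0.0.1:50051",
        "10.0.0.2:50051",
        "10.0.0.3:50051",
    }}
    for i := 0; i < 6; i++ {
        fmt.Println("RPC", i+1, "->", rr.pick())
    }
}
```

Each RPC goes to the next backend in turn, which is the per-request distribution that a single pinned connection cannot give you.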

gRPC with Envoy Proxy

Envoy understands HTTP/2 natively. It balances at the request level, not the connection level. This matters because gRPC connections are long-lived -- a TCP load balancer routes all requests from one client to one backend. Envoy distributes individual RPCs across backends. You also get request metrics, distributed tracing, circuit breaking, and rate limiting without changing application code.

In Kubernetes, Istio injects Envoy as a sidecar automatically; Linkerd does the same with its own lightweight proxy. But running a service mesh to fix gRPC load balancing is a significant operational commitment. Be honest about whether you need it or whether client-side round-robin is enough.

Health Checking

gRPC defines a standard health checking protocol in grpc.health.v1. Implement it and your load balancer knows whether the service is ready for traffic. Minimal setup:

import (
    pb "github.com/myapp/proto/orders"
    "google.golang.org/grpc"
    "google.golang.org/grpc/health"
    healthpb "google.golang.org/grpc/health/grpc_health_v1"
)

func main() {
    grpcServer := grpc.NewServer()

    // Register the order service
    orderSvc := newOrderServer()
    pb.RegisterOrderServiceServer(grpcServer, orderSvc)

    // Register the health service
    healthServer := health.NewServer()
    healthpb.RegisterHealthServer(grpcServer, healthServer)

    // Mark the service as serving
    healthServer.SetServingStatus(
        "orders.OrderService",
        healthpb.HealthCheckResponse_SERVING,
    )

    // You can update the status dynamically,
    // e.g., mark as NOT_SERVING during shutdown
    // healthServer.SetServingStatus(
    //     "orders.OrderService",
    //     healthpb.HealthCheckResponse_NOT_SERVING,
    // )

    // ... start the server as before
}

Kubernetes queries this endpoint directly using the grpc liveness and readiness probe type. Mark NOT_SERVING during graceful shutdown so existing connections drain before the pod terminates.

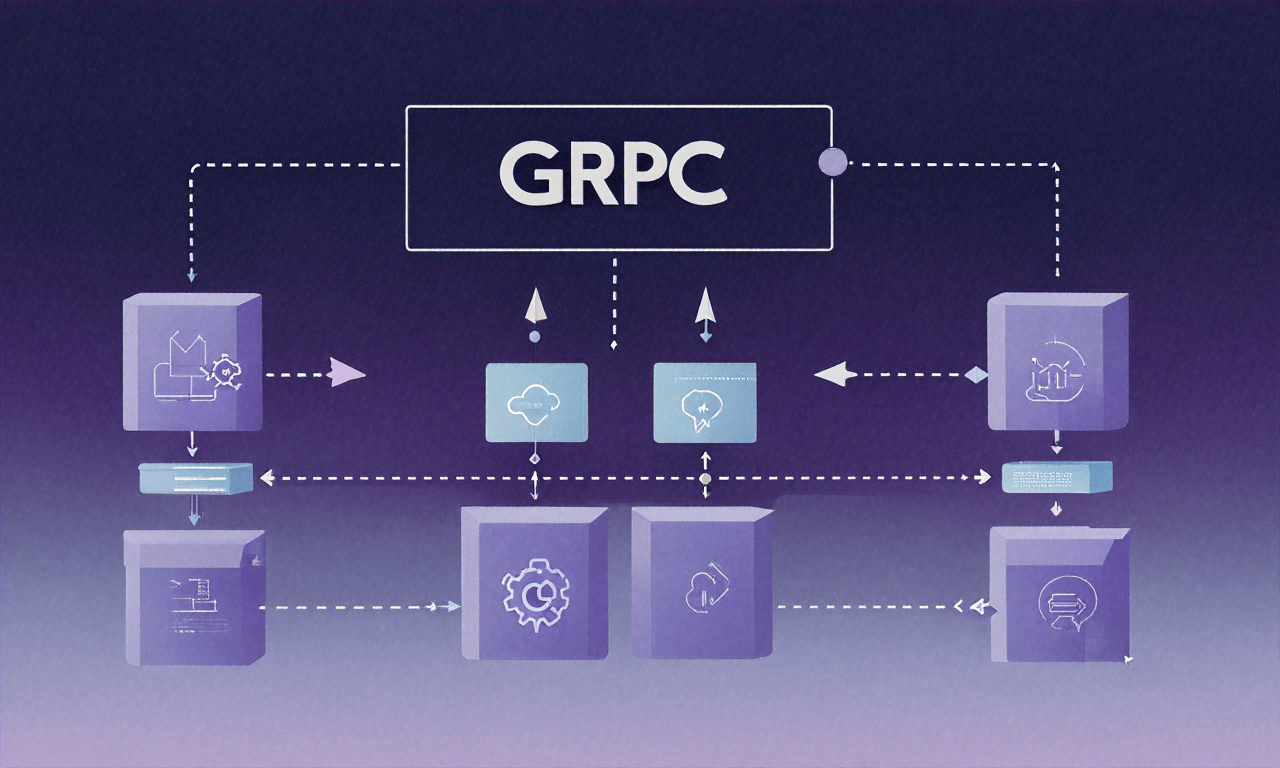

How the Pieces Fit

DNS or a service registry handles discovery. Client-side resolver and balancer distribute calls. Envoy or an API gateway provides TLS termination and observability. Health checks keep the system self-healing. Deadlines prevent one slow service from cascading into total failure. A lot of moving parts for what used to be "make an HTTP request." Worth it at scale. Overkill for five services.

For the Kubernetes side, see our Kubernetes for Developers guide. For local development, the Docker Compose Guide covers the setup.

gRPC between your own services: great. gRPC exposed to the public internet: painful. No browser support without grpc-web, terrible error messages for API consumers, and every debugging tool assumes JSON. Pick your battles.

Set deadlines on every call. Put your proto files in a dedicated repository with CI that auto-generates client libraries on every change. Wire in interceptors for logging, metrics, and tracing before you ship -- retrofitting observability into a running system is painful and it always gets deprioritized until something breaks at 2 AM. A gRPC call without a deadline is a goroutine leak waiting to happen. That is not a best practice. That is a production incident.